Troposphere is a word-cloud rendering plugin built in JavaScript on Canvas using Fabric.

https://github.com/scipilot/troposphere

It has a few artistic options such as the “jumbliness”, “tightness” and “cuddling” of the words, how they scale with word-rankings, colour and brightness. It also has an in-page API to connect with UI controls.

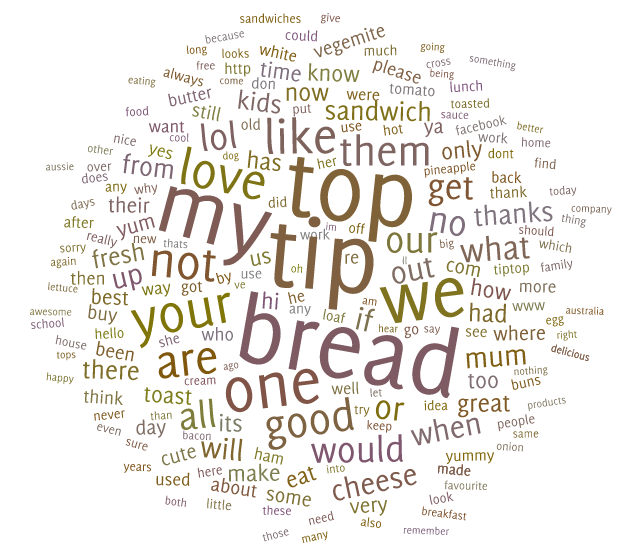

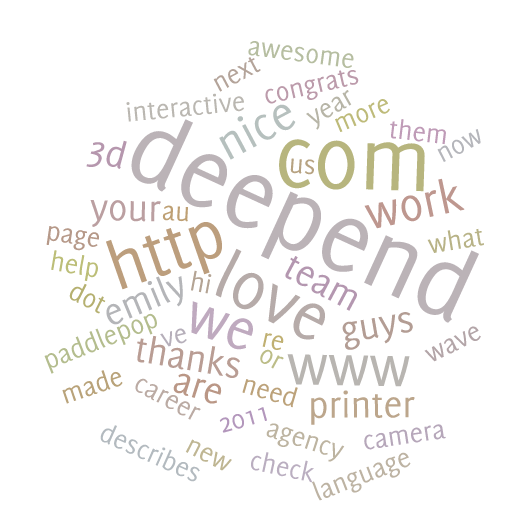

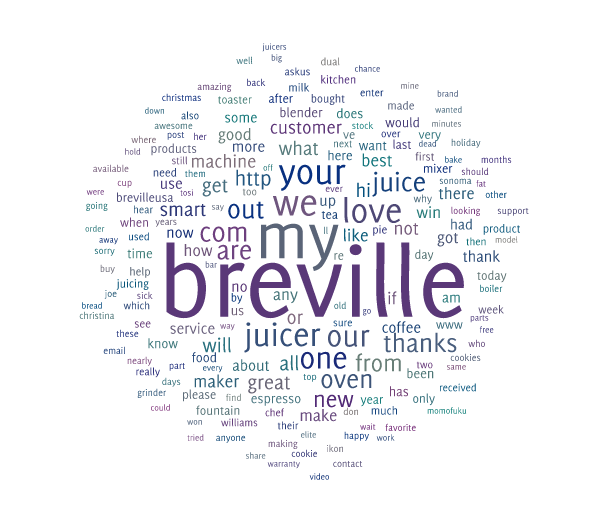

I developed this visualisation in my spare time a few years ago, while I was building a product codenamed “Tastemine”, which was originally conceived by Emily Knox, the social media manager at Deepend Sydney. This proprietary application was designed to collect “stories” – posts, comments and likes on our clients’ Facebook pages – with analytical options ranging from keyword performance tracking through to sentiment analysis. After scraping and histogramming keywords from this (and other potential sources), we added the option to render the result in Troposphere as a playful way to present the data visually.

While ownership of a page enables you to access much more detailed data, it’s surprising how much brand identity you can collect from public stories on company pages. We used this to great effect, walking into pitches with colourful (if slightly corny) renditions of the social zeitgeist happening right now on their social media. Some embarrassing truths and some pleasant surprises were amongst the big friendly letters.

I think the scaling of the word frequencies is one of the most compelling aspects of word clouds. Often I’d hear clients cooing over seeing their target brand-message keywords standing out in large bold letters. Whether it truly proves success or not is questionable, but the big words had spoken. We certainly used it to visualise success trends, as the messages we were marketing grew larger in the social chatter over time.

It was, at the time of writing, the only pure-HTML5 implementation, with visual inspiration taken from existing Java and other backend cloud generators such as Wordle, which didn’t have APIs and so couldn’t be used programmatically. It was quite challenging to get right: it still occasionally crashes some letters together, and it still takes some time to render large clouds. I made huge leaps in optimisation and accuracy during development, so it may be possible to perfect it further with improved collision detection and masking algorithms.

It was built in the very early days of HTML5 Canvas, when we were all lamenting the loss of the enormous Flash toolchain. It felt like 1999 all over again, programming in “raw” primitives with no hope of finding libraries for sophisticated and well-optimised kerning, tweening, animating or transforming, and as for physics, forget it. These were both sad and exciting times – we were in a chasm between the death of Flash and the establishment of a proper groundswell of JavaScript. At the time Fabric was one of the contenders for a humanising layer over the raw Canvas API, handling polyfills and normalisation, plus a mini-framework which actually had all sorts of strange scoping quirks.

One of my dear friends, and at the time one of the rising-star developers, Lucy Minshall, was suffering more than most from the sudden demise of Flash – being a Flash developer. I chose this project as training material for her as it was a good transition example from the bad old ways of evil proprietary Adobe APIs to the brave new future following the Saints of WHATWG. It also contained some really classic programming problems and difficult maths, and required a visual aesthetic – perfect for a talented designer-turned-Flash-developer like Lucy. Who cares what language you’re writing in anyway – it’s the algorithms that matter!

The most interesting and difficult part of the project was “cuddling” the words, as Lucy and I came to call it with endless mirth. This was the idea of fitting the word shapes together like Tetris pieces so they didn’t overlap. Initially I implemented a box model where the rectangle around each glyph couldn’t intersect with another bounding box. That was easy! Surely it wouldn’t be so hard to swap that layout strategy for one that respected the glyph outlines?
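The box-model check is simple enough to sketch. This is a minimal illustration rather than Troposphere's actual code: the `{x, y, width, height}` box shape and the function names `boxesIntersect` and `isFree` are assumptions made for the example.

```javascript
// Hypothetical box shape: {x, y, width, height}, with (x, y) the top-left corner.
// Two axis-aligned boxes overlap only if they overlap on both axes.
// Boxes that merely share an edge are treated as not intersecting.
function boxesIntersect(a, b) {
  return a.x < b.x + b.width &&
         b.x < a.x + a.width &&
         a.y < b.y + b.height &&
         b.y < a.y + a.height;
}

// A candidate position is free only if its box misses every already-placed box.
function isFree(candidate, placed) {
  return !placed.some((box) => boxesIntersect(candidate, box));
}
```

A check like this is cheap, which is why it also makes a useful first filter before any more expensive outline-level test.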

While I can’t remember all the possibilities I tried (there were lots: utter failures, false starts, weak algorithms and CPU toasters), a few of the techniques which stuck are still interesting.

The main layout technique (inspiration source sadly lost) was placing the words in a spiral from the centre outwards. This really helped both the placement algorithm, in getting a “cloud” shape, and the visual appeal and pseudo-randomness – considering people don’t like truly random things.
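As a rough sketch of the spiral idea, the snippet below walks an Archimedean spiral outwards from a centre point and returns the first position a caller-supplied test accepts. The spiral type, the names `spiralPoints` and `placeOnSpiral`, and the tuning values `growth` and `angleStep` are all assumptions for illustration, not taken from the Troposphere source.

```javascript
// Candidate points along an Archimedean spiral (r = growth * theta).
// `growth`, `angleStep` and `maxSteps` are illustrative tuning values.
function* spiralPoints(cx, cy, growth = 2, angleStep = 0.3, maxSteps = 5000) {
  for (let i = 0; i < maxSteps; i++) {
    const theta = i * angleStep;
    const r = growth * theta;
    yield { x: cx + r * Math.cos(theta), y: cy + r * Math.sin(theta) };
  }
}

// Walk the spiral until `fits(x, y)` accepts a position.
// Returns null if no candidate is accepted within the step budget.
function placeOnSpiral(cx, cy, fits) {
  for (const p of spiralPoints(cx, cy)) {
    if (fits(p.x, p.y)) return p;
  }
  return null;
}
```

Because every word starts its search at the centre, the biggest words (placed first) tend to claim the middle and later words pack around them, which is what gives the result its cloud outline.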

Another technique, borrowed from my years as a Windows C/C++ programmer in the “multimedia” days, was bit-blitting and double/triple buffering. Now this was a pleasant Canvas surprise, as bitmap operations were pretty new to Flash at the time and felt generally impossible on the web. The operations used to test whether words were overlapping involved some visually distressing artefacts with masks and colour inversions and so on, so I needed to do that stuff off-screen. Also, for performance purposes I only needed to scan the intersecting bounding boxes of the words, so copying small areas to a secondary canvas for collision detection was much more efficient. Fortunately Canvas allows you to do these kinds of raster operations (browser willing) even though it’s mainly a vector-oriented API.
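To make the "only scan the intersecting area" idea concrete, here is a simplified sketch. It swaps the mask and colour-inversion approach described above for a plain alpha comparison, so it should be read as an illustration of the principle, not as the library's method. The function names, the box shape `{x, y, width, height}` and the `alphaThreshold` parameter are hypothetical.

```javascript
// Intersection rectangle of two boxes {x, y, width, height}, or null if disjoint.
function intersection(a, b) {
  const x = Math.max(a.x, b.x);
  const y = Math.max(a.y, b.y);
  const right = Math.min(a.x + a.width, b.x + b.width);
  const bottom = Math.min(a.y + a.height, b.y + b.height);
  return right > x && bottom > y
    ? { x, y, width: right - x, height: bottom - y }
    : null;
}

// Both arguments are ImageData-like objects { width, height, data } holding
// RGBA bytes for the SAME rectangle, one rendered from each word.
// Returns true if any pixel is visibly inked in both.
function pixelsCollide(a, b, alphaThreshold = 0) {
  if (a.width !== b.width || a.height !== b.height) {
    throw new Error("regions must have identical dimensions");
  }
  // Alpha is every fourth byte, starting at index 3.
  for (let i = 3; i < a.data.length; i += 4) {
    if (a.data[i] > alphaThreshold && b.data[i] > alphaThreshold) return true;
  }
  return false;
}
```

In a browser, assuming each word has been drawn to its own off-screen canvas using the same coordinates, the two regions could be read with `ctx.getImageData(r.x, r.y, r.width, r.height)`, where `r` is the result of `intersection`. The smaller that rectangle, the fewer pixels need scanning.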

Producing natural-looking variations in computer programs often suffers from the previously mentioned problem that true randomness and human perception of randomness are two very different things. People are so good at recognising patterns in noisy environments that you have to purposely smooth out random clusters to avoid people having religious crises.

During this project I produced a couple of interesting random variants which I simply couldn’t find in the public domain at the time. The randomisation I developed is based around the normal distribution (bell curve) and is cut off at around three standard deviations to prevent wild outliers, instead of at the usual min-max. The problem with typical random numbers over many iterations is you get a “flat line” of equal probabilities between the min and max, like a square plateau. This isn’t normal! Say your minimum is 5 and your max is 10: over time you’ll get many 5.01s but never a single 4.99. In real life, everything is a normal distribution! Really you want to ask an RNG for a centre-point, and a STDEV to give the spread. I was pretty surprised (after coming up with the idea) that I couldn’t find anything, in any language, to implement it. I’d been working on government-certified RNGs recently, and had even interfaced with radioactive-decay-driven RNGs in my youth, so believed I was relatively well versed in the topic. So I reached for my old maths text books and did it myself – with some tests – and visualisations of course!
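One way to get this behaviour, sketched here under assumptions rather than copied from the library, is to draw a standard normal value with the Box-Muller transform and simply redraw whenever it lands beyond the cut-off. The names `standardNormal` and `boundedNormal`, the injectable `rng` parameter and the choice of redrawing (rather than clamping) are all mine for the example.

```javascript
// Standard normal sample (mean 0, stdev 1) via the Box-Muller transform.
// `rng` must return uniform values in [0, 1); Math.random is the default.
function standardNormal(rng = Math.random) {
  const u = 1 - rng(); // shift to (0, 1] so Math.log never sees 0
  const v = rng();
  return Math.sqrt(-2 * Math.log(u)) * Math.cos(2 * Math.PI * v);
}

// Normal sample around `centre` with spread `stdev`, redrawn until it lies
// within `cutoff` standard deviations, so wild outliers never escape.
function boundedNormal(centre, stdev, cutoff = 3, rng = Math.random) {
  let z;
  do {
    z = standardNormal(rng);
  } while (Math.abs(z) > cutoff);
  return centre + z * stdev;
}
```

For example, `boundedNormal(7.5, 0.5)` returns values clustered around 7.5 that never fall outside 6 to 9. Redrawing keeps the bell shape intact up to the cut-off, whereas clamping would pile extra probability onto the two boundary values.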

Having bell-curve weighted random numbers really helped give a soothing natural feel to the “jumbliness” of the words and to the spread of the generated colour palettes. It’s an effect that’s difficult to describe – it has to be seen (A/B tested) to be appreciated. I wonder if they are secretly used in other areas of human-computer relations.

Performance was one of the biggest, or at least longest, challenges. In fact it never ended. I was never totally happy with how hot my laptop got on really big clouds with all the rendering options turned on. Built into the library are some performance statistics and – you guessed it – meta-visualisation tools in the form of histograms of processing time over word size.

I also experimented with sub-pixel vs. whole-pixel rendering but didn’t find the optimisation gains some people swore by, when rounding to true pixels.

After a lot of hair pulling, there were some really fun moments when a sudden head-slap led to a reworking of the collision detection algorithm (the main CPU hog), which gave us a huge leap in performance. I’m sure there are still many optimisations to make, and I’d be happy to accept any input, which is why I’ve open-sourced it after all this time.

While tag clouds may be the Mullets of the Internet, programming them almost certainly contributes to baldness.